AI News | Editor: Sandy

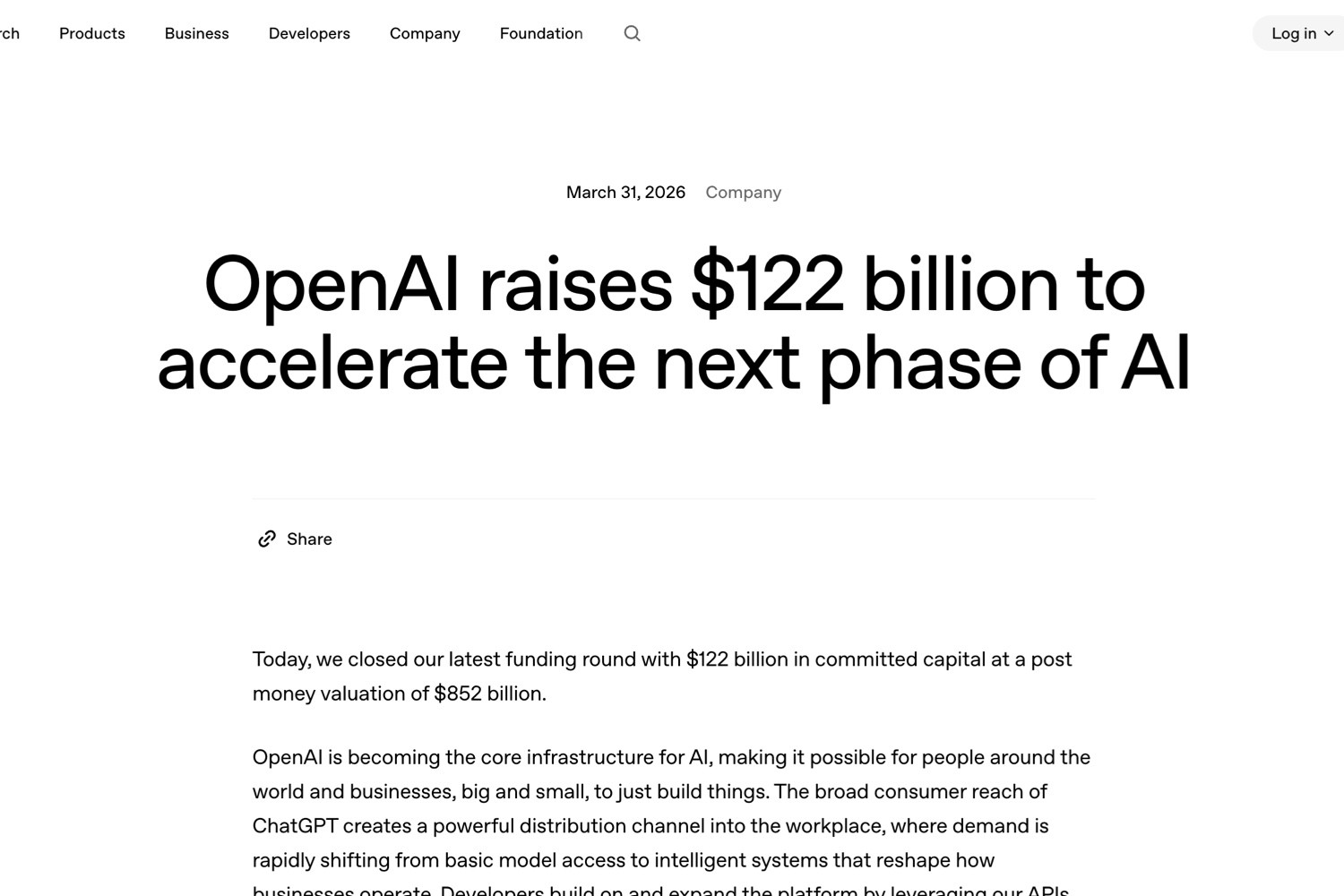

On April 22, 2026, OpenAI introduced ChatGPT workspace agents, pushing its enterprise version of ChatGPT from the role of a conversational assistant toward something closer to a digital colleague. According to OpenAI’s official announcement, “Introducing workspace agents in ChatGPT” (https://openai.com/zh-Hant/index/introducing-workspace-agents-in-chatgpt/), the feature is now available in research preview for ChatGPT Business, Enterprise, Edu, and Teachers plans at no additional cost until May 6, 2026, after which it will shift to a credit-based pricing model. Its central promise is straightforward: employees can describe a job, and ChatGPT can help build an agent that is shareable across teams, connected to tools, capable of remembering workflows, and designed to improve over time.

This is not the first time OpenAI has offered GPTs or customised AI tools. What is different this time is that workspace agents are no longer merely personal prompt wrappers, knowledge bases, or chat interfaces. OpenAI describes them as an evolution of GPTs, powered by Codex and able to run in the cloud, handling multi-step tasks such as drafting reports, writing code, responding to messages, organising data, producing presentations, updating CRM systems, and creating tickets. In other words, ChatGPT is moving from being a text-based entry point for white-collar work to becoming the execution layer of enterprise processes. This marks a crucial turn in the commercialisation of generative AI: models still matter, but the value that enterprises are willing to purchase, govern, and measure increasingly comes from whether those models can safely enter organisational workflows.

From GPTs to Workspace Agents: The Difference Lies Not in Chat, but in Governed Action

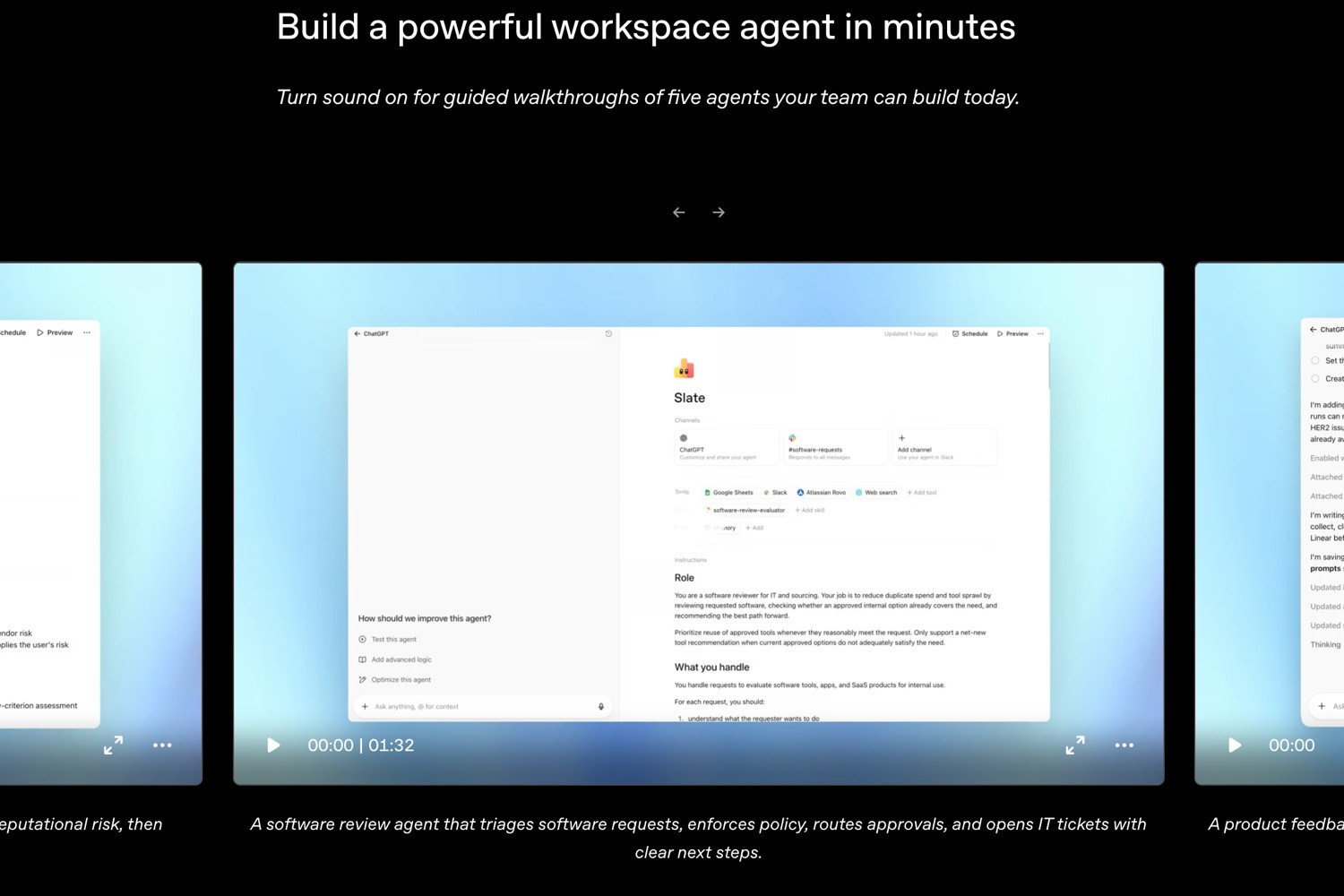

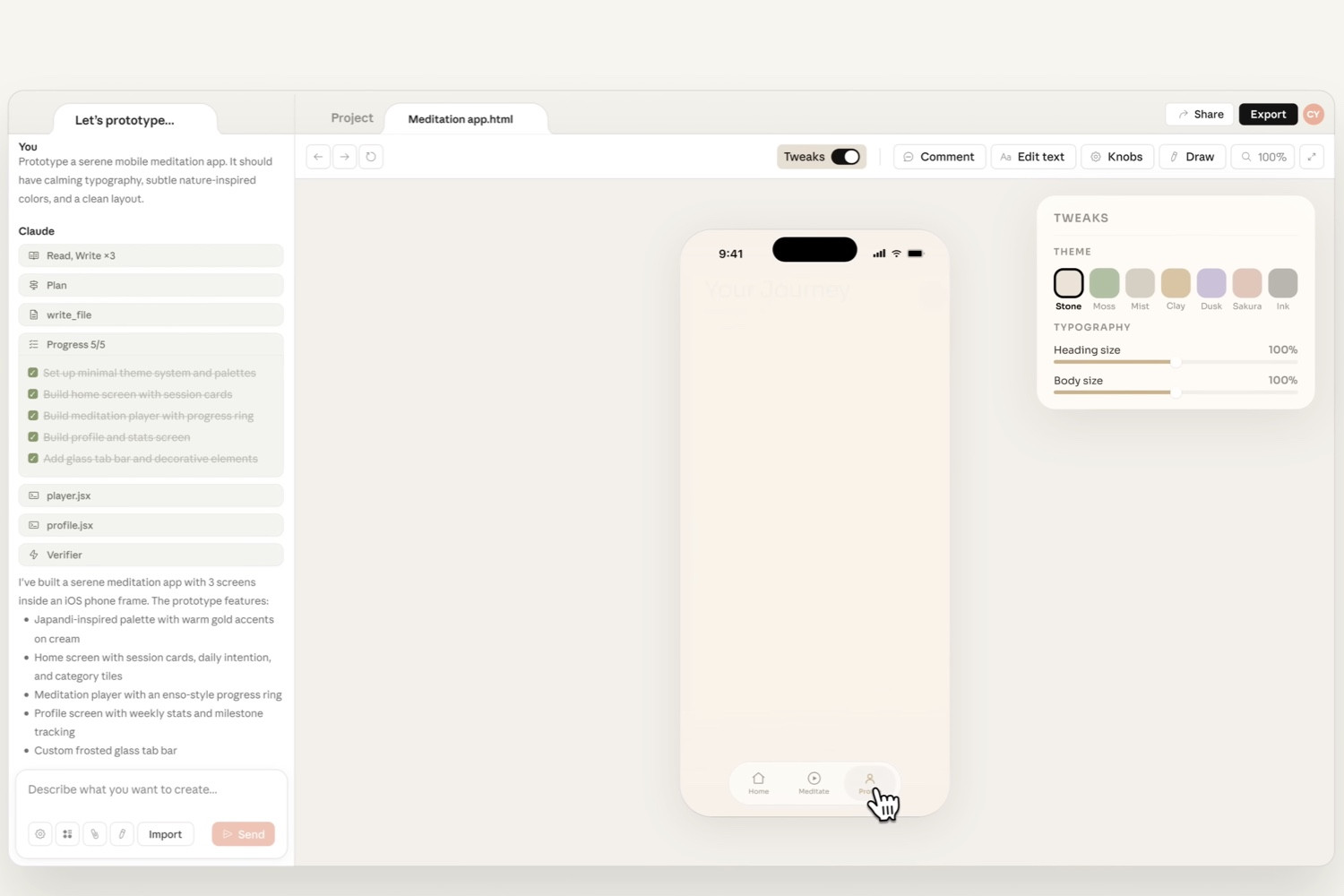

The product logic behind OpenAI’s latest release is to commercialise something many companies have already been experimenting with internally: automated, AI-assisted workflows. The announcement says users can describe the work they want done, or upload files, and ChatGPT will help define the steps, connect suitable tools, add skills, and iterate through testing until the agent meets the need. OpenAI’s examples include reviewing software access requests, triaging product feedback, generating weekly metrics reports, prospecting sales leads, and managing third-party risk. These tasks share a common pattern: they have traditionally required people to switch across multiple systems, read documents, retrieve data, write summaries, decide the next step, and then send the result to Slack, email, a CRM, or a ticketing system.

What makes workspace agents notable is not that they can generate more polished text, but that they are designed as executable, shareable, and monitorable units of work. OpenAI says agents can access a workspace that includes files, code, tools, and memory; they can write or run code, use connected applications, remember what they have learned, and continue tasks across multiple steps. They can also be used by teams in ChatGPT and Slack, with support for more surfaces planned. This design points to a broader industry trend: the AI race is no longer only about which model gives the cleverest answer, but about which company can insert models into the seams of daily enterprise work so they can complete more background labour without disrupting human workflows.

Governance is just as central to the announcement. OpenAI emphasises that organisations can control which data and tools agents can use, which actions they can perform, and which sensitive steps require approval, such as editing spreadsheets, sending emails, or creating calendar events. Enterprise and Edu administrators can also use role-based permissions to control who may create, share, and use agents, while monitoring agent settings, updates, and runs through the Compliance API. These measures may appear cautious, but they are essential for broader enterprise adoption. If agents are to be treated as a new form of digital labour inside companies, they cannot merely be intelligent. They must also be auditable, interruptible, and accountable.
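The governance pattern described above (an allow-list of tools, plus human approval for sensitive steps, plus an audit trail) can be illustrated with a minimal Python sketch. It is not OpenAI's API or implementation; `AgentPolicy`, `decide`, and the action names are hypothetical and exist only to show the shape of the logic.

```python
from __future__ import annotations

from dataclasses import dataclass, field


# Hypothetical policy model for illustration; none of these names
# come from OpenAI's products or the Compliance API.
@dataclass
class AgentPolicy:
    allowed_tools: set[str]                 # actions the agent may attempt at all
    needs_approval: set[str]                # sensitive actions gated on a human
    audit_log: list[tuple[str, str]] = field(default_factory=list)

    def decide(self, action: str, approved: bool = False) -> str:
        """Return 'deny', 'pending', or 'allow', and record the decision."""
        if action not in self.allowed_tools:
            outcome = "deny"
        elif action in self.needs_approval and not approved:
            outcome = "pending"             # wait for a human sign-off
        else:
            outcome = "allow"
        self.audit_log.append((action, outcome))
        return outcome


policy = AgentPolicy(
    allowed_tools={"read_file", "send_email"},
    needs_approval={"send_email"},
)
print(policy.decide("read_file"))
print(policy.decide("send_email"))
print(policy.decide("send_email", approved=True))
```

The point of the sketch is that every decision, including a denial, leaves a record, which is what makes an agent auditable rather than merely restricted.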

The Enterprise Evolution of Codex: From Writing Code to Orchestrating Knowledge Work

OpenAI describes workspace agents as being powered by Codex, a detail that reveals the product’s technical direction. Codex was initially best known for helping developers write and understand code. By placing it inside a broader enterprise workspace, OpenAI is extending the ability to “programmatically handle tasks” beyond engineering departments. When an AI agent needs to read data, call tools, execute code, inspect outputs, and determine the next step, coding capability is no longer just a specialist skill for building software. It becomes infrastructure for reliable AI action.

This also explains why OpenAI’s workspace agents are not simply an upgraded chatbot. Enterprise workflows are rarely single-turn question-and-answer exchanges. They tend to involve long chains of conditions, exceptions, permissions, and handoffs. A sales team handling prospects, for example, does not merely need a follow-up email. It may also need lead scoring, research, call-note summaries, CRM updates, and posts to team channels. In the announcement, OpenAI cites early feedback from Rippling, saying that its account executives were able to build, evaluate, and refine a sales opportunity agent without relying on engineering teams, turning what had previously taken five to six hours per week of data preparation into background automation. If such cases can be replicated at scale, AI will move beyond “improving individual productivity” and begin to reshape departmental processes.

Still, there is a considerable distance between technical demonstration and stable commercial deployment. Enterprise workflows are filled with exceptions, and data sources are often incomplete, inconsistent, or entangled with complex permissions. For an agent to run in the cloud for extended periods, it needs not only strong model reasoning, but also reliable state management, error recovery, logging, and security boundaries. OpenAI says built-in safeguards are designed to help agents continue following user instructions when they encounter misleading external content, such as prompt-injection attacks. That acknowledgement matters. In enterprise markets, the central concern is not whether AI can complete eight out of ten tasks. It is what happens during the other two, when failure may carry costs the organisation cannot easily absorb.
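What state management, error recovery, and logging mean in practice can be sketched in a few lines of Python. This is an illustrative toy under stated assumptions, not how workspace agents are built: `run_steps`, the `done` and `failed_at` keys, and the retry count are all hypothetical.

```python
import logging
from typing import Callable

log = logging.getLogger("agent")


def run_steps(
    steps: list[tuple[str, Callable[[dict], None]]],
    state: dict,
    max_retries: int = 2,
) -> dict:
    """Run named steps in order; retry failures and skip steps already done."""
    done = state.setdefault("done", [])     # checkpoint of completed step names
    for name, step in steps:
        if name in done:
            continue                        # resume: do not repeat finished work
        for attempt in range(max_retries + 1):
            try:
                step(state)                 # each step mutates the shared state
                break
            except Exception as exc:
                log.warning("step %s failed (attempt %d): %s", name, attempt + 1, exc)
        else:
            state["failed_at"] = name       # retries exhausted: stop and report
            return state
        done.append(name)
    return state
```

Because completed steps are recorded in the state itself, a run that stops at `failed_at` can be handed to a person or resumed later without redoing earlier work, which is the behaviour that matters in the two-out-of-ten cases.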

International Competition: American Tech Giants Are Turning AI Agents Into Enterprise Gateways

OpenAI’s announcement is not an isolated event. It is part of a broader contest among American enterprise AI platforms. Microsoft has already positioned Copilot Studio as a tool for companies to build AI agents. According to Microsoft’s official page, “Microsoft Copilot Studio” (https://adoption.microsoft.com/en-us/ai-agents/copilot-studio/), AI agents can range from simple question-and-answer assistants to autonomous agents that execute entire workflows end to end, while companies can build, govern, and secure agents through Microsoft 365 Copilot, Azure AI Foundry, and Copilot Studio. Microsoft’s advantage lies in its existing enterprise software estate: Outlook, Teams, SharePoint, Dynamics, and Azure are natural distribution channels.

Google is approaching the opportunity from search, cloud, and model infrastructure. According to Google Cloud’s official blog post, “Google Agentspace enables the agent-driven enterprise” (https://cloud.google.com/blog/products/ai-machine-learning/google-agentspace-enables-the-agent-driven-enterprise), Google Agentspace emphasises helping employees discover and adopt agents quickly, while allowing them to build agents with no-code tools that combine enterprise search, Chrome Enterprise, and Google’s existing Workspace ecosystem. Google’s strategy is to bind agents to enterprise knowledge retrieval, so that AI does not merely generate text but helps employees make sense of sprawling internal information. For large organisations, this may create both overlap and differentiation with OpenAI’s workspace agents: OpenAI stresses conversational workspaces and task execution, while Google leans more heavily on search, cloud platforms, and model infrastructure.

Salesforce, meanwhile, embeds agents directly into customer relationship management and business workflows. According to Salesforce’s official press release, “Salesforce’s Agentforce Is Here: Trusted, Autonomous AI Agents to Scale Your Workforce” (https://www.salesforce.com/uk/news/press-releases/2024/10/29/agentforce-general-availability-announcement/), Agentforce provides customisable autonomous AI agents that connect to enterprise data and take action across sales, service, marketing, and commerce. This reflects another route: rather than starting from a general chat entry point, Salesforce begins with specific enterprise applications and data models, bringing AI closer to measurable revenue, service, and marketing outcomes.

China’s market may follow a different rhythm. Baidu, Alibaba, Tencent, ByteDance, and others are all advancing AI tools across enterprise models, office suites, cloud services, and customer-service automation. But Chinese enterprise adoption is heavily shaped by data compliance, private deployment, and vertical industry scenarios. Compared with American giants, which tend to emphasise agents across SaaS ecosystems, China’s enterprise market may lean more toward combinations of cloud vendors, industry solution providers, and large platforms. Europe introduces yet another variable. The AI Act and data-protection regimes will raise compliance costs for companies adopting autonomous agents, but they may also push suppliers to make auditability, explainability, and human oversight standard features earlier. These regional differences show that the global race in AI agents is not simply a contest among products. It is a compound game involving cloud infrastructure, office ecosystems, regulation, and corporate culture.

Business Models Are Shifting: From Seat Subscriptions to Metered Digital Labour

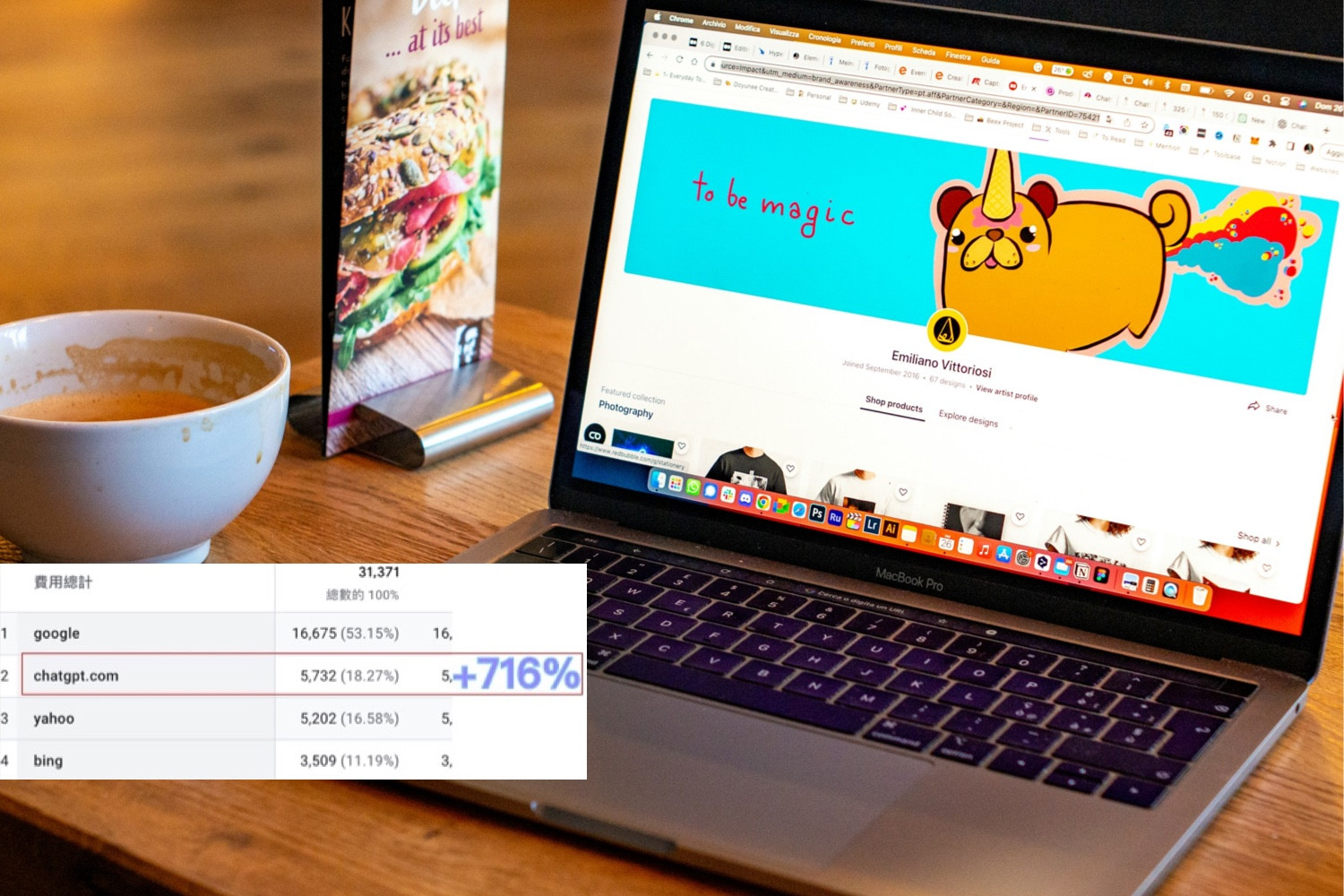

OpenAI’s decision to make workspace agents free until May 6, 2026, and then move to credit-based pricing is an important detail. Traditional SaaS software has usually been priced by seat, with companies paying for accounts on a per-user basis. Early generative AI products often followed a similar pattern. But if workspace agents can run for extended periods, execute across tools, and complete concrete tasks, both their costs and their value begin to resemble workload rather than headcount. Credit-based pricing suggests that OpenAI may be testing a new model that sits somewhere between cloud computing and digital labour: enterprises pay for the model inference, tool calls, and computing resources consumed by agents as they perform tasks.

That will change how companies calculate AI purchases. If an agent can save a sales team several hours each week by preparing data, procurement departments can compare its cost against labour costs, conversion rates, or response speed. If an agent merely generates the occasional summary, willingness to pay will be far lower. In the future, AI companies may advertise not only model accuracy or context-window size, but also agent task-completion rates, hours saved, error rates, approval wait times, and cross-system success rates. This metric-driven shift could benefit OpenAI by turning frequent ChatGPT use into measurable enterprise workflow spending. It also creates pressure, because companies will demand clearer returns on investment.
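The procurement comparison can be reduced to simple arithmetic. The Python sketch below is a back-of-the-envelope model only: OpenAI has not published the figures used here, so the hourly cost, credit consumption, and price per credit are invented placeholders, and only the five hours per week echoes the Rippling example cited earlier.

```python
def weekly_net_value(
    hours_saved: float,
    hourly_cost: float,
    credits_used: float,
    price_per_credit: float,
) -> float:
    """Labour value recovered minus metered agent spend, per week."""
    return hours_saved * hourly_cost - credits_used * price_per_credit


def break_even_credits(
    hours_saved: float, hourly_cost: float, price_per_credit: float
) -> float:
    """Credits per week at which agent spend equals the labour saved."""
    return hours_saved * hourly_cost / price_per_credit


# All inputs below are assumed values for illustration, not real pricing.
net = weekly_net_value(hours_saved=5, hourly_cost=60, credits_used=400, price_per_credit=0.25)
limit = break_even_credits(hours_saved=5, hourly_cost=60, price_per_credit=0.25)
print(net)    # 200.0
print(limit)  # 1200.0
```

Under these assumed numbers the agent is worth running as long as it consumes fewer than 1,200 credits a week; an agent that saves little time has a correspondingly small budget before it costs more than it returns.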

Industry Impact: AI Begins to Rewrite the Organisation of White-Collar Work

The medium- and long-term impact of workspace agents may not lie in whether one employee writes fewer emails. It may lie in how companies redesign work itself. Many organisational processes have long depended on humans acting as glue between systems: copying and pasting across tools, compiling data, checking status, and tracking decisions. These tasks are often not regarded as core creative work, yet they consume a substantial amount of time. If agents can reliably take over this coordination and preparation work, companies may redefine the boundaries of roles in junior analysis, operations, customer support, sales support, finance close processes, and education administration.

Yet this will also create new management problems. The first is agent sprawl. If every team can build its own agents, companies may quickly find themselves with large numbers of overlapping, inconsistent, and poorly governed agents. OpenAI’s agent library, usage analytics, and administrative controls are designed to respond to this risk. The second is knowledge ossification. If agents encode a team’s current best practice into a workflow, they may improve consistency in the short term while making the organisation too dependent on old processes in the long term, reducing sensitivity to exceptions and innovation. The third is accountability. When an agent drafts an email, updates data, or creates a ticket, responsibility for mistakes will need to be clarified among builders, users, administrators, and vendors through company policy and legal practice.

For employees, agents are not simply either a threat or a benefit. They may reduce tedious work and free people to focus on higher-value judgment. They may also accelerate the pace of work and raise managerial expectations for output. Historically, office automation has rarely made work disappear; more often, it has redistributed tasks and changed the threshold of skills required. If workspace agents become widespread, companies will need more than employees who know how to write prompts. They will need people who understand processes, data, risk, and how to evaluate the quality of AI output. These “workflow designers” may become central to the next phase of enterprise AI adoption.

An Open Ending: OpenAI’s Opportunity and Burden Grow Together

OpenAI’s launch of workspace agents shows that its enterprise strategy is moving from models and chat products toward workflow platforms. The company is not merely competing for the amount of time employees spend inside ChatGPT each day. It is competing for repeatable, measurable, and automatable segments of internal enterprise work. That will bring OpenAI into more direct competition with Microsoft, Google, Salesforce, and cloud and SaaS providers around the world. It will also force the company to confront the toughest requirements of enterprise markets: reliability, security, governance, predictable costs, and clear accountability.

If workspace agents can prove that they are more than a novelty, and that they can continuously save time, reduce friction, and improve decision quality across sales, finance, engineering, customer service, and education, enterprise AI adoption may accelerate again. If they encounter barriers in permissions, errors, integration, and cost, the market may shift quickly from enthusiasm to caution. Either way, this release sends a clear signal: the next contest in generative AI will not be about who can answer questions best, but who can be trusted to get work done.