As generative artificial intelligence moves beyond chatbots, summaries and search, and deeper into the routines of office work and knowledge labour, the competitive frontier is no longer defined simply by who has the largest models or the most computing power. It is increasingly shaped by who can obtain the richest and most realistic data about how work is actually done. From that perspective, Meta’s decision to require tracking software on the computers of its American employees—capturing mouse movements, clicks, keystrokes and occasional screenshots—is not merely another story about corporate surveillance. It is a sign that digital capitalism is entering a new phase, one in which firms seek to convert the ordinary flow of human work into training data for artificial intelligence.

What makes this shift significant is that it unsettles a boundary that once seemed relatively clear: the line between labour and data. In the past, companies purchased employees’ time, skills and output. Now, as AI systems become better at imitating the practical mechanics of work—navigating interfaces, using shortcuts and carrying out multi-step digital tasks—companies are also seeking access to something more strategically valuable: the process by which workers complete those tasks. The real question is therefore not simply whether monitoring is taking place, but who owns the data generated by work itself, and how that ownership reshapes bargaining power inside the firm.

On the Surface, This Looks Like Surveillance; in Substance, It Is the Datafication of Labour

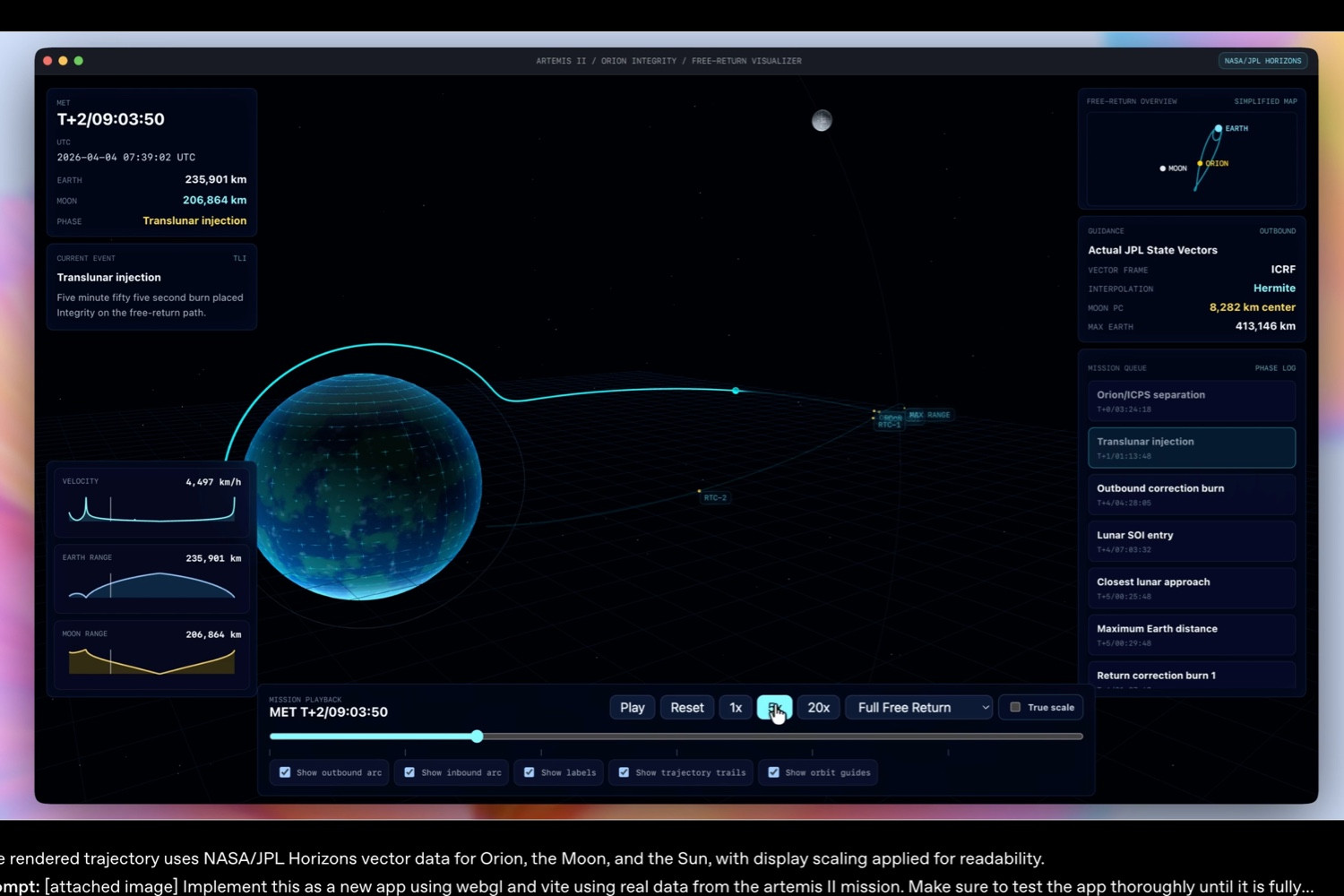

According to a report in The Times of India, Meta has informed its US employees that it will begin collecting usage behaviour across specific work applications and websites to help train AI models. The aim is to improve the models’ ability to perform the sorts of computer-based tasks at which AI still tends to struggle, such as navigating dropdown menus, following click paths and using keyboard shortcuts reliably. Reuters, in its report “Meta to start capturing employee mouse movements, keystrokes for AI training data” (https://www.reuters.com/sustainability/boards-policy-regulation/meta-start-capturing-employee-mouse-movements-keystrokes-ai-training-data-2026-04-21/), added that the internal programme is known as the Model Capability Initiative. The data, Reuters reported, will be used to train AI agent systems that can autonomously perform workplace tasks, while Meta has said the information will not be used for performance evaluation and is intended only for model training.

Placed in the context of Meta’s recent organisational direction, the move is highly consistent. Over the past two years, the company has steadily expanded AI from a product feature into an organising logic for internal operations, while framing efficiency, streamlining and automation as central to corporate restructuring. In other words, this is not simply an expansion of workplace oversight. It is a deeper attempt to rewrite the production process itself: to break down forms of digital work that have so far depended on skilled employees into observable, labelable and repeatable samples for machine learning. Employees, in this setting, are not merely workers. They are becoming suppliers of training material within the model-development pipeline.

That changes the nature of the issue. Traditional workplace monitoring has typically been justified in terms of supervision, compliance or security. Meta’s initiative links monitoring directly to AI capability-building. The company is not merely trying to see what employees are doing; it is trying to learn how they do it, so that systems may eventually reproduce part of that behaviour. In economic terms, this resembles the conversion of tacit skill and procedural knowledge into an explicit asset, one that can be absorbed into capital-intensive technical systems and scaled far beyond the individual worker.

The Real Question Is Who Controls the Power to Model Workflows

The deeper concern raised by this story is not limited to employee privacy. It concerns the redistribution of power between corporate governance, data ownership and labour. Once a company can extract detailed interaction traces from everyday office software and train AI agents on them, the ownership of workplace knowledge begins to shift from individuals and teams to the platform and the model. Much of this knowledge has historically been difficult to measure clearly. It resides in exception-handling, judgement calls, sequencing, switching between windows and the small corrections that experience makes possible. Once it is captured in granular form, however, the company may gain the capacity to automate it.

That is why Meta’s approach matters structurally. The company is not merely teaching AI to click buttons on a screen. It is moving closer to a managerial ideal in which systems take over high-frequency, standardisable forms of knowledge work, while human employees move into roles centred on oversight, intervention and quality control. Reuters, in the report cited above, described Meta’s internal direction as one in which “agentic systems” would increasingly execute work while humans guide, review and improve their output. On the surface, that sounds like an upgraded division of labour. In practice, it may also imply a profound redefinition of what many mid-level white-collar jobs are worth.

The traditional tension between capital and labour reappears here in a new form. In the industrial era, the main struggles concerned hours, output and wages. In the platform era, they centred on data and distribution. In the age of AI, the conflict increasingly concerns control over process data and over the degree to which a task can be automated. If firms can collect that data at scale, the result may be more than a better product. It may become a structural advantage: an enhanced ability to redesign workflows, reduce headcount, redraw job categories and alter the boundary between internal work and outsourcing.

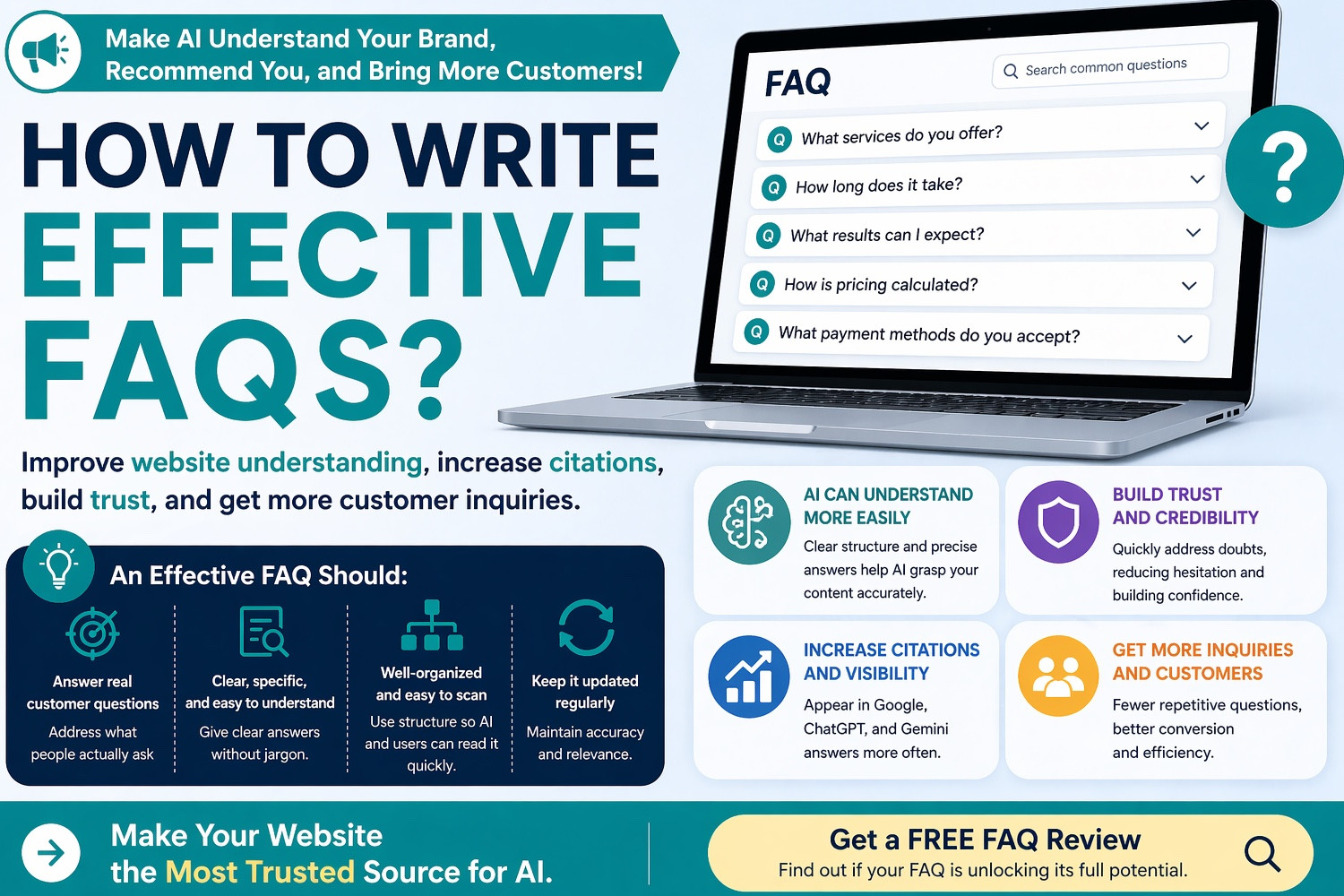

The United States, Europe and Canada Reflect Different Regulatory Philosophies

It is telling that Meta is beginning this initiative in the United States rather than launching it globally from the outset. That alone suggests how important institutional context has become. In the United States, privacy and labour protections remain fragmented, often divided across sectors and states rather than governed by a single comprehensive federal framework. The US Federal Trade Commission, in “The Federal Trade Commission 2023 Privacy and Data Security Update”, has noted that the country still lacks a comprehensive federal privacy and data security law. Oversight therefore relies heavily on the FTC Act and narrower, sector-specific statutes. In practice, that leaves employers with relatively broad room to manoeuvre in ordinary commercial settings, including many aspects of the employer-employee relationship.

Europe operates according to a markedly different logic, one that places greater weight on proportionality, necessity and rights-based review. The European Union’s “Your Europe” guidance on “Data protection under GDPR” states that when new technologies create high risks to individual rights and freedoms, companies may need to conduct a data protection impact assessment. The European Data Protection Board has repeatedly indicated that systematic monitoring is a high-risk category of data processing. In Europe, therefore, collecting employee clicks, keystrokes and screen interactions at scale would not simply trigger a duty of notice. It would raise broader questions about necessity, data minimisation, legitimate-interest balancing and, in many countries, the role of employee representatives or works councils. Eurofound, in “Employee monitoring: A moving target for regulation” (https://www.eurofound.europa.eu/en/publications/all/employee-monitoring-moving-target-regulation), has likewise observed that workplace monitoring in Europe sits at the intersection of fundamental rights, data protection law and labour law, making it difficult to treat surveillance as a purely technical management tool.

Britain, though no longer part of the European Union, has retained a broadly similar regulatory sensibility. The UK Information Commissioner’s Office, in its guidance on monitoring at work, has made clear that employee monitoring is not unlawful in itself, but it must be lawful, transparent and fair, and it must not intrude on workers’ private sphere in a disproportionate manner. The key issue is not whether a business has a commercial purpose, but whether less intrusive means are available and whether employees clearly understand what is being collected, why it is being collected, how it will be used and how long it will be retained.

Canada offers yet another variation, one that places particular emphasis on trust and workplace culture. The Office of the Privacy Commissioner of Canada, in “Privacy in the Workplace” (https://www.priv.gc.ca/en/privacy-topics/employers-and-employees/02_05_d_17/), states that employers should inform employees about the purpose, nature, extent, reasons and potential consequences of workplace monitoring. Canadian regulators have also warned in recent years that the spread of employee-monitoring technologies has exposed how outdated many legal protections have become in digital workplaces. In other words, the issue is not only whether employers can monitor workers, but how far such monitoring may erode trust, dignity and the basic terms of employment.

Taken together, these jurisdictions reveal a striking divergence. The United States tends to let innovation proceed first and patch gaps later through state law or case-by-case enforcement. Europe is more inclined towards ex ante governance and rights-balancing. Canada occupies a middle ground, seeking clearer transparency rules that preserve managerial flexibility without hollowing out trust. Meta’s decision to start with American staff reflects how AI firms are sequencing deployment according to regulatory tolerance.

This Is Not Merely a Privacy Dispute; It Is a New Way of Pricing Institutions and Efficiency

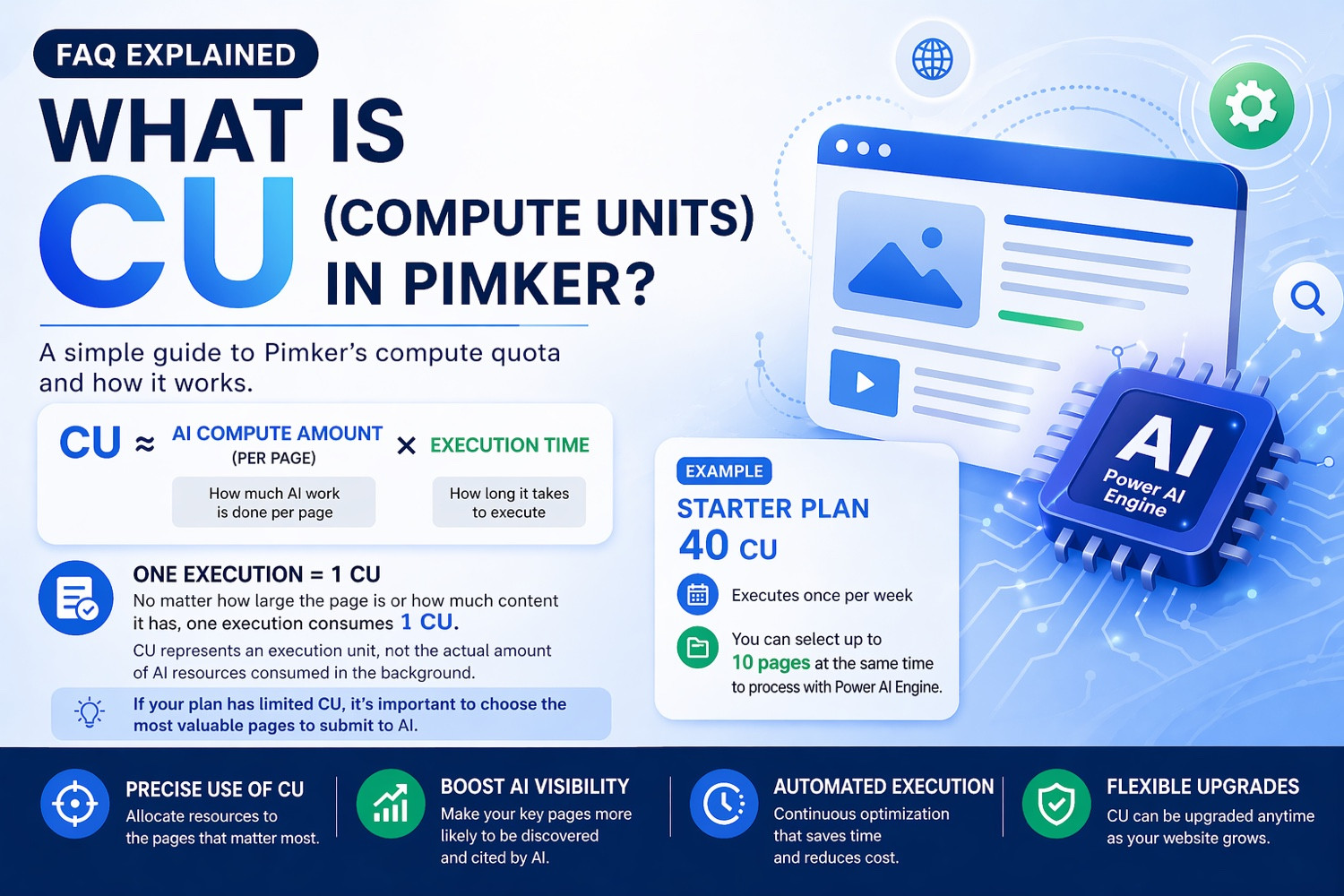

From an institutional perspective, Meta’s move shifts what might once have been seen as a human-resources issue into the centre of AI governance. Once monitoring data is used for model training, it is no longer just a temporary management record. It becomes a reusable and cumulative foundation for technical capability. Its economic significance lies in the fact that observation ceases to serve only managerial control and begins instead to feed directly into capital formation.

That has at least two long-term consequences. First, the causal link between data collection and automation becomes tighter. Companies may argue that data collection is needed to improve productivity, but employees will find it difficult to ignore the other possibility: as models become better at performing routine digital tasks, the importance, scarcity and wage premium associated with some roles may gradually fall. Second, new internal hierarchies are likely to emerge. Workers who design workflows, define tasks and evaluate AI output may become more valuable. Those whose work consists largely of carrying out standardised digital procedures may increasingly be treated as interchangeable, easier to outsource or more exposed to having parts of their role absorbed by AI.

The OECD, in its research on “Using AI in the workplace” and “Algorithmic management in the workplace”, has argued that AI and algorithmic systems can improve efficiency, but may also deepen information asymmetries by concentrating supervision, task allocation, evaluation and workflow design on the side of management and software systems. Seen in that light, Meta’s initiative is less an isolated corporate experiment than an early illustration of a broader production model taking shape in knowledge industries: employees continue doing their jobs while simultaneously providing the behavioural data needed to train systems that may one day replace part of that work.

The Lesson for Taiwan and Asia Is That Technology Often Arrives Before Governance

For Taiwan and much of Asia’s technology sector, this episode has wider relevance than may first appear. In many Asian markets, the regulatory framework linking digital oversight, labour law and AI governance remains underdeveloped. Firms may move quickly to adopt productivity tools, customer-service agents, coding assistants and internal workflow agents, while lagging far behind in answering basic questions about legitimacy. Can employee interaction data be used to train such systems? Is de-identification enough? Do workers have a right to refuse, or at least to make a meaningful choice?

This matters particularly in Taiwan, where both the technology manufacturing sector and the digital-services economy place a high premium on efficiency, delivery and process optimisation. If no institutional line is drawn early, it would not be difficult to imagine similar practices spreading. The problem is that when companies justify expanded data collection in the name of competitiveness, workers rarely have symmetrical bargaining power. This is therefore not only a matter of personal data protection. It also concerns labour law, corporate governance, collective bargaining and industrial policy. If Asian markets do not establish principles of transparency, minimisation, purpose limitation and independent review at an early stage, AI adoption may proceed first along the most convenient managerial path rather than the most balanced institutional one.

The Counter-Argument Is Not Baseless, but the Risk Lies in Exceptional Measures Becoming Routine

To be fair, the corporate argument is not entirely without merit. If AI agents are genuinely expected to carry out complex office tasks, publicly available web data, simulated environments and standard benchmarks are unlikely to be enough. From a technical standpoint, high-quality human demonstration data may be one of the necessary ingredients for moving AI from systems that can talk to systems that can act. Meta’s position rests precisely on that claim: if models are to understand how digital work is done in the real world, they must observe how people actually do it.

Yet the main danger lies not in the existence of that logic, but in its ability to normalise ever-expanding surveillance. Today the company may collect mouse paths and keyboard inputs. Tomorrow it may seek voice interactions, meeting behaviour, task-switching rhythms or cross-platform collaboration data. Today it may insist that the information is not used for performance evaluation. In the future, under cost pressure or changing management priorities, that boundary may prove fragile. Institutional design matters precisely because corporate assurances are often less durable than technical capability.

In the Age of AI, Firms Are Not Only Raising Efficiency; They Are Rewriting the Labour Contract

Meta’s decision deserves deeper scrutiny because it reveals a wider reality about corporate governance in the age of AI. Productivity gains are no longer coming simply from software that assists workers. They increasingly come from workers first translating their own methods into data that systems can learn from, after which those systems may gradually begin to assume the work themselves. The implicit content of the labour contract changes as a result. What employees provide is no longer just present output. It also includes procedural knowledge that can be absorbed by capital and reused over time.

At a more macro level, this may well be a preview of how knowledge work will be reorganised over the coming decade. Firms will become more aggressive in turning invisible work processes into data, models and platforms. Regulators, meanwhile, will be forced to decide which forms of data collection count as legitimate management and which cross the line into excessive intrusion on privacy, personhood and labour rights. The question of real consequence is not whether one technology company has moved too far, but whether existing legal systems, worker representation and governance structures are capable of drawing meaningful boundaries once more companies begin to treat the way employees work as raw material for AI.

That question remains unsettled. What is already clear, however, is that the reallocation of power among work, data and capital has only just begun.