AI Software | Editor: Sandy

OpenAI on April 21st 2026 unveiled “ChatGPT Images 2.0”, pushing image generation beyond a showcase of visual flair and closer to a practical production tool. The upgrade is not chiefly about prettier pictures. Instead, OpenAI is explicitly arguing that this generation marks a substantial leap in instruction following, text rendering, multilingual output and support for multiple aspect ratios, with the model now available across ChatGPT, Codex and the API. More strikingly, in thinking mode for paid plans, the system can draw on live web information, gathering context before producing an image. The company’s ambition is therefore larger than improving AI art. It is trying to make AI produce visuals that marketing teams, educators, designers and businesses can use immediately.

From generating images to generating deliverables

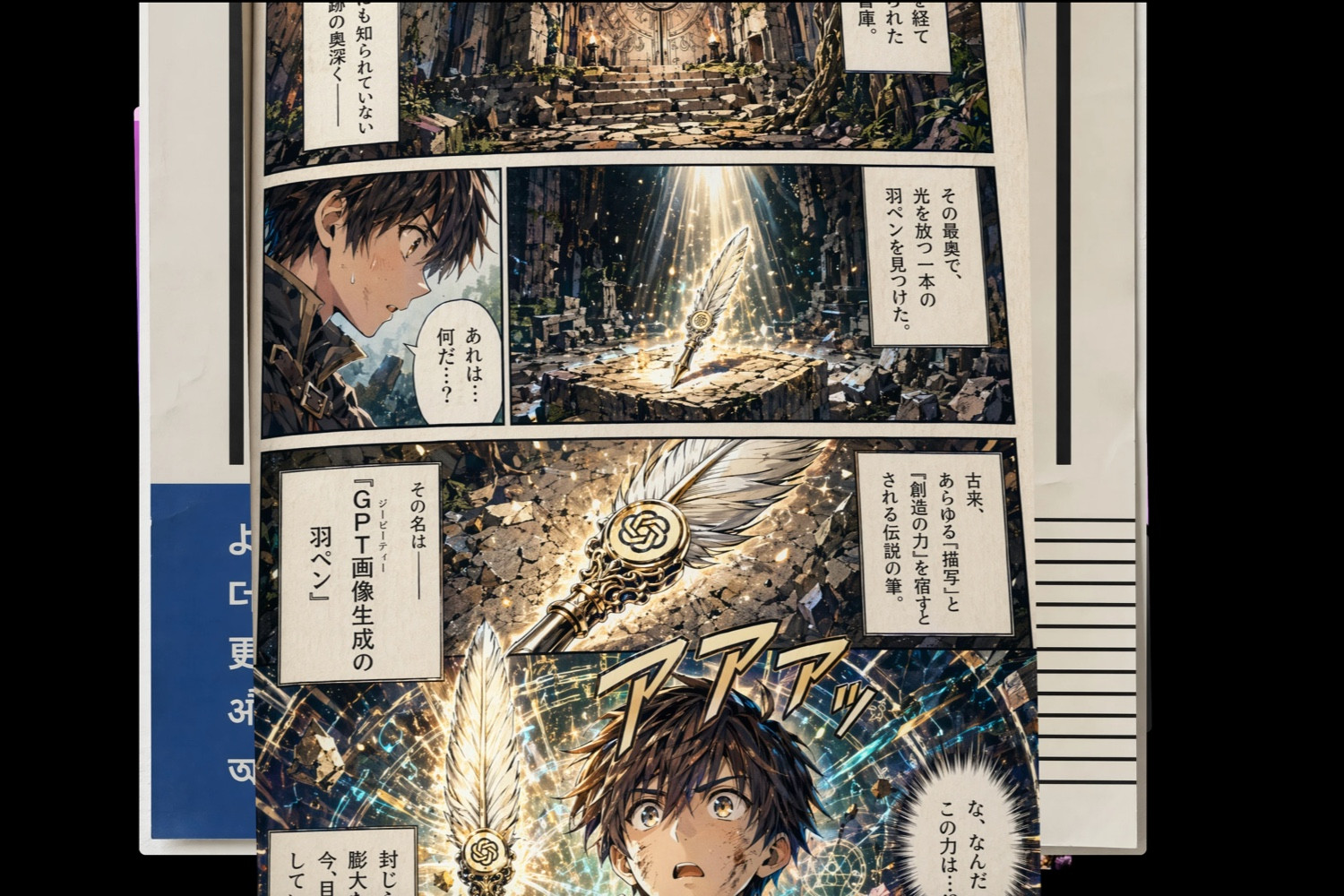

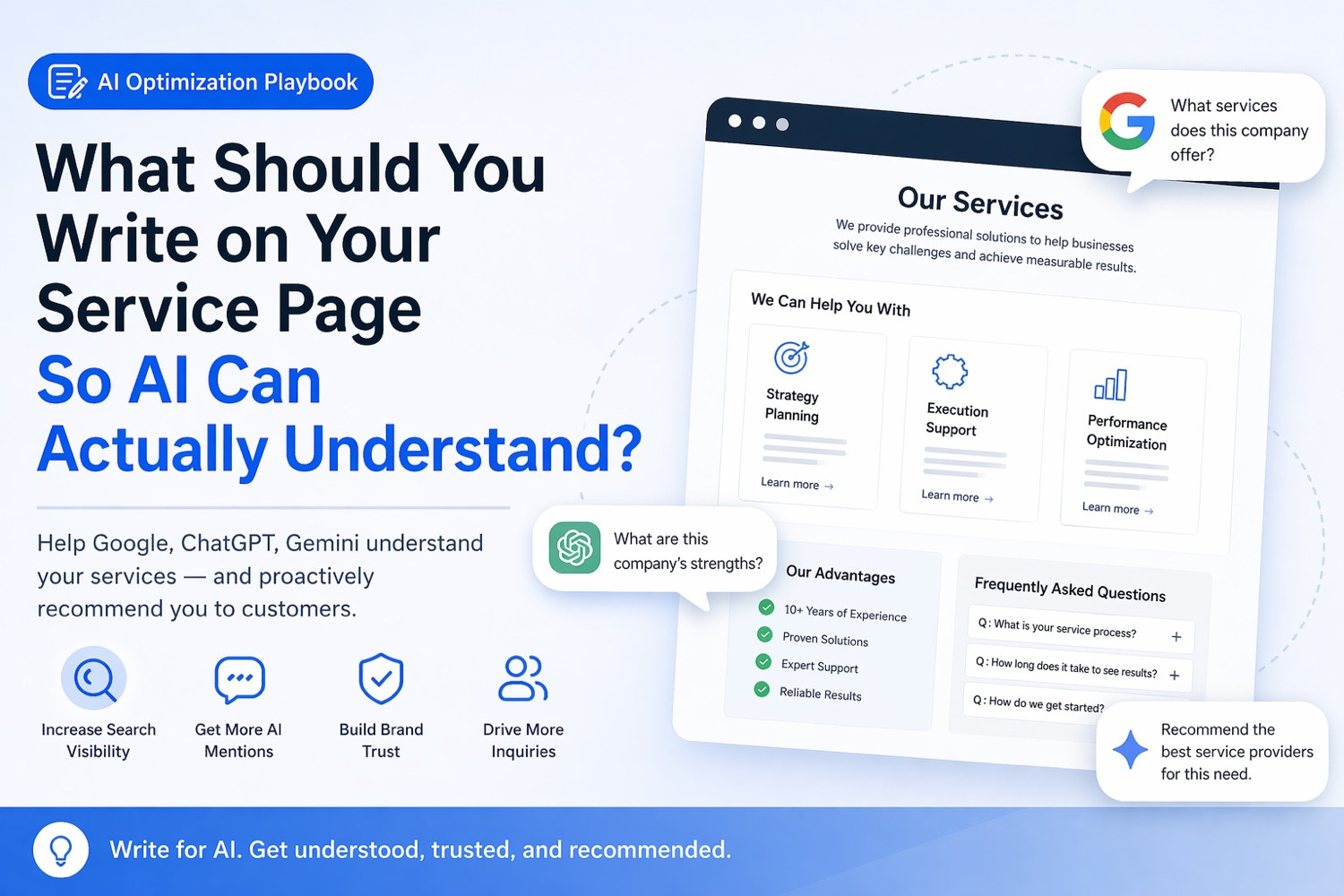

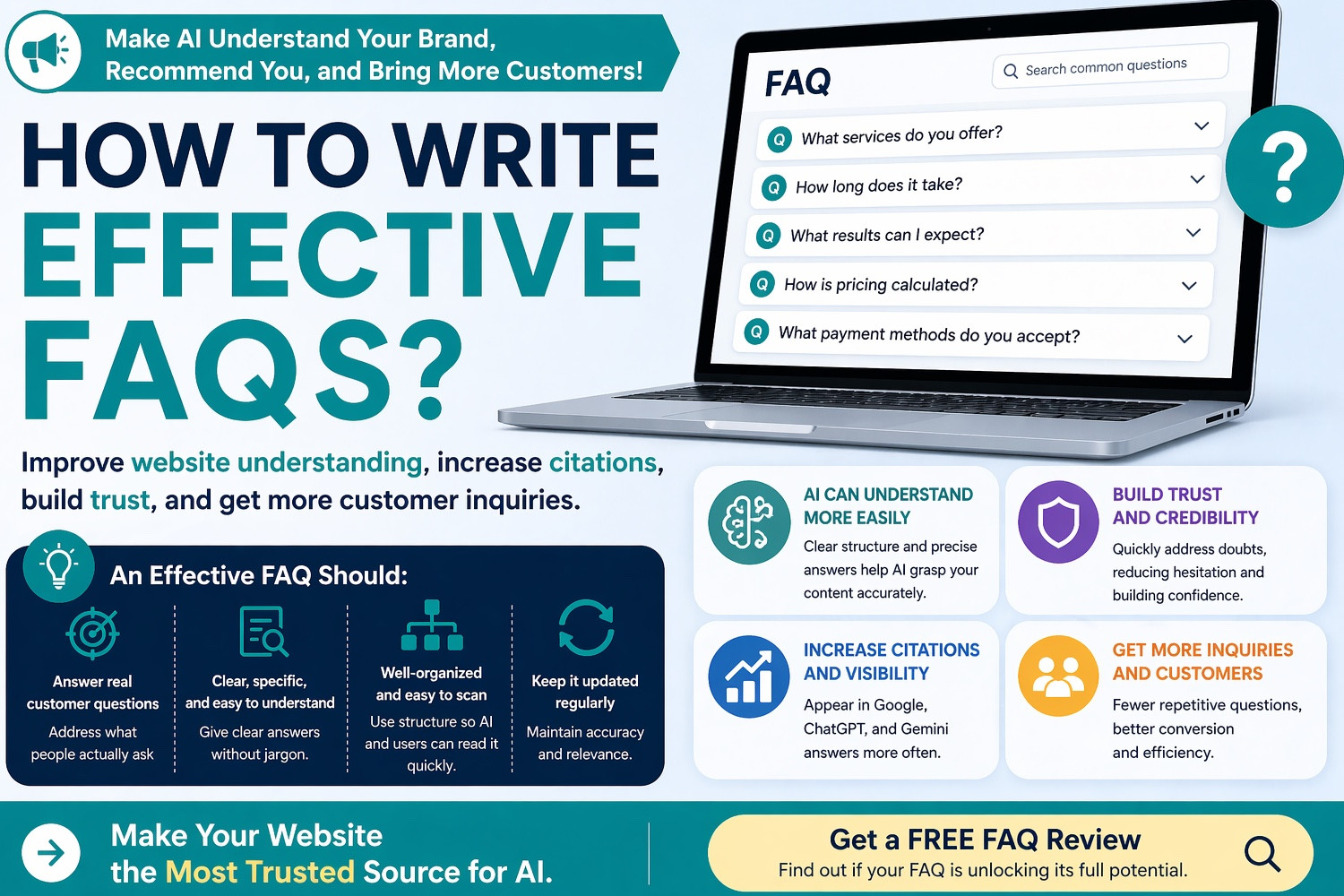

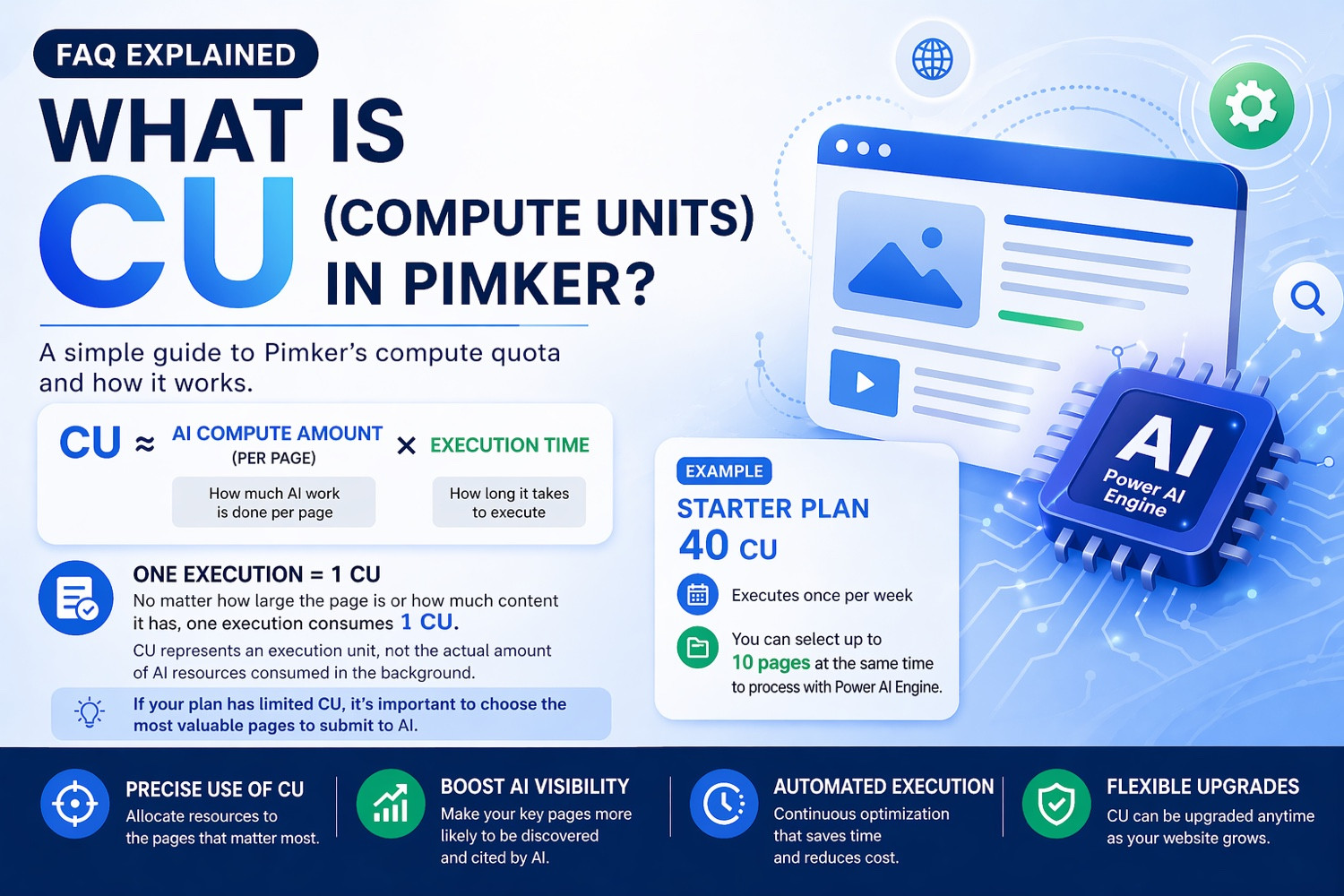

According to OpenAI’s official announcement, “Introducing ChatGPT Images 2.0” (https://openai.com/index/introducing-chatgpt-images-2-0/), the new model is designed around greater precision and control. In practice, that improvement is concentrated in several areas that have long frustrated image-generation systems. First, text is more legible and better able to hold its place within a layout. Second, the model appears more reliable when asked to produce posters, infographics, comic panels, educational materials and branded visuals, all of which are dense with typography. Third, output is no longer confined to the familiar square image but more naturally supports horizontal, vertical and elongated formats, which matters for social platforms, presentations, adverts and e-commerce pages alike.

That sets ChatGPT Images 2.0 apart from an earlier wave of image tools whose main strength lay in producing eye-catching atmospherics, stylised illustrations or photorealistic scenes. Those systems were often impressive until a task required a readable headline, a correctly labelled map, a coherent brand hierarchy or a complete page structure. At that point, a human designer usually had to rebuild the result from scratch. What OpenAI is trying to close is precisely that gap between “inspirational draft” and “usable asset”.

The technical advance lies in text, context and layout control

The most notable technical feature of the release is that OpenAI no longer treats image generation merely as pixel prediction. It is positioning the system more like a multimodal model that can understand content intent, layout logic and contextual constraints. According to OpenAI’s documentation for “GPT Image 2 Model” and “Image generation” on its developer platform (https://developers.openai.com/api/docs/models/gpt-image-2; https://developers.openai.com/api/docs/guides/image-generation), gpt-image-2 is presented as the company’s most advanced image model, supporting flexible sizes and high-fidelity image input, with both generation and editing available through the API. In other words, this is no longer just a text-to-image engine. It is intended to function as a foundational capability that can be embedded in commercial workflows, publishing pipelines and software products.
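To make the “embedded in workflows” point concrete, the sketch below shows how a publishing pipeline might prepare one brief for several aspect ratios before handing it to an image API. It is an illustration only: the helper `build_image_request`, the `ASPECT_SIZES` table and the size strings are assumptions modelled on the “WIDTHxHEIGHT” convention of earlier OpenAI image models, not values confirmed for gpt-image-2. The documentation cited above is the authority on actual parameters.

```python
import json

# Assumption: sizes are "WIDTHxHEIGHT" strings, as with earlier OpenAI image
# models. These particular values are illustrative, not confirmed for gpt-image-2.
ASPECT_SIZES = {
    "square": "1024x1024",
    "landscape": "1536x1024",
    "portrait": "1024x1536",
}


def build_image_request(prompt: str, aspect: str = "square",
                        model: str = "gpt-image-2") -> dict:
    """Build a request body for an image-generation call (hypothetical helper).

    Nothing is sent over the network; the caller passes the returned dict
    to whichever SDK or HTTP client the pipeline already uses.
    """
    if aspect not in ASPECT_SIZES:
        raise ValueError(f"unknown aspect: {aspect!r}")
    return {"model": model, "prompt": prompt, "size": ASPECT_SIZES[aspect]}


if __name__ == "__main__":
    brief = "Bilingual event poster with a headline, date line and venue map"
    # One brief, several formats: social post, slide and vertical advert.
    for aspect in ("square", "landscape", "portrait"):
        print(json.dumps(build_image_request(brief, aspect), ensure_ascii=False))
```

Keeping request construction separate from the network call means the same brief can be audited, logged or queued before any image is generated.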

A closer reading of OpenAI’s examples suggests that the hardest problem it is trying to solve is “structured visual output”. Whether the task is an infographic, a teaching poster, a comic page, a set of study notes or a book cover, the model must handle semantics, typography, composition, hierarchy and narrative continuity all at once. That is a far more difficult problem than producing a single beautiful illustration. It requires the system not only to generate an image but to understand what information should stand out, which elements must remain consistent and how multiple writing systems can coexist in the same frame.

OpenAI’s emphasis on multilingual text rendering also carries obvious commercial significance. Image models have long struggled outside English, especially in Chinese, Japanese, Korean, Arabic and South Asian languages, where they often produce misspellings, warped glyphs or unstable layouts. This is not just a quality issue. It directly affects whether a system can be deployed globally. If AI can reliably serve only English-speaking markets, it cannot truly become an international product. Improvements in this area suggest that OpenAI has shifted its focus from whether a model can generate images to whether it can be rolled out worldwide.

Thinking mode hints at more than a feature upgrade

A more strategic element of the launch is the integration between image generation and thinking mode. According to OpenAI’s official materials, including release notes and support pages on image generation in ChatGPT, ChatGPT Images 2.0 is available across ChatGPT plans, while the more deliberate, reasoning-led generation experience is initially reserved for paid users. In that mode the system can spend longer planning and refining outputs and, in certain cases, use live web information. That points to a larger product direction. OpenAI is trying to turn image generation from a one-shot feature into an agentic creative workflow that can research, structure, decide and then render.

The commercial implications are significant. Earlier AI imaging tools behaved like powerful generation engines: a user entered a prompt, received several candidate outputs and handled the rest manually. But if a model can first understand a brief, then search for current information, and finally generate a polished output containing text, graphics, messaging and layout structure, it starts to encroach on presentation design, advertising production, educational publishing, brand-asset creation and even the day-to-day content operations of small businesses. Seen from that angle, OpenAI is not merely selling a better image model. It is extending ChatGPT’s claim to be a broader productivity platform.

Among American rivals, the race is shifting from image quality to workflow integration

In an international context, OpenAI’s move is not an isolated event but part of a wider change across the generative-media industry. Google had already signalled a similar direction. In its official post, “Fuel your creativity with new generative media models and tools” (https://blog.google/innovation-and-ai/products/generative-media-models-io-2025/), the firm introduced Imagen 4 with an emphasis on stronger detail, improved spelling and typography, up to 2K resolution and support for multiple aspect ratios. That suggests that “better text, more layouts, more print-ready outputs” is becoming a mainstream competitive benchmark, rather than a niche feature.

Yet Google’s strategy differs in emphasis. It tends to fold image capabilities into Gemini and a broader ecosystem of consumer services. In its post “New ways to create personalized images in the Gemini app” (https://blog.google/innovation-and-ai/products/gemini-app/personal-intelligence-nano-banana/), Google highlights personalisation and ties to Google Photos, underlining consumer context and service integration. OpenAI, by contrast, is framing this release more explicitly around workflow efficiency from prompt to finished visual asset. Its target audience seems closer to professional content creators, knowledge workers and developers.

Adobe, another American contender, represents a third path. According to the company’s official announcement, “Adobe Ushers in a New Era of Creativity with...” (https://news.adobe.com/news/2026/04/adobe-new-creative-agent), Firefly is being extended with new image and video editing capabilities and broader model partnerships inside an existing suite of creative tools. Adobe’s advantage has never chiefly been winning model leaderboards. It lies in its deep integration with Photoshop, Illustrator, Premiere and enterprise marketing systems, along with its longstanding emphasis on commercial safety and licensing clarity. If OpenAI represents an AI-native creative platform, Adobe remains the AI-upgraded version of the existing creative industry’s infrastructure. The contest between them is therefore not merely about output quality, but about who can embed most effectively in real business workflows.

Chinese firms are accelerating, and the contest no longer belongs to Silicon Valley alone

Viewed from China, the competitive picture is equally intense. Alibaba, in its official post “Alibaba Unveils Wan2.7 Redefining Personalized and Precision Image Creation” (https://www.alibabacloud.com/blog/alibaba-unveils-wan2-7-redefining-personalized-and-precision-image-creation_602995), presents Wan2.7-Image as a unified generation-and-editing model centred on fidelity, personalisation and professional-grade control. ByteDance, meanwhile, in its product page “Seedream 5.0 Lite” and its technical post “Introducing Seedream 5.0 Lite” (https://seed.bytedance.com/en/seedream5_0_lite; https://seed.bytedance.com/en/blog/deeper-thinking-more-accurate-generation-introducing-seedream-5-0-lite), explicitly ties image generation to deeper reasoning and online search capabilities. This matters because it shows that China’s leading model providers are also moving toward the same convergence of generation, thinking and information retrieval.

That is worth close attention. It suggests that the global race in image AI is converging on a common destination. The contest is no longer about who can produce the most photorealistic face or the most dreamlike landscape. It is about who can best understand a task, handle information-dense scenarios, support multiple languages and formats and connect smoothly to tools and services. Chinese markets add a particular twist: commercial pressure is often sharper, and platforms are generally quicker to embed models into e-commerce, advertising, creator platforms and enterprise software. OpenAI’s release may come from America, but some of its most formidable competitors are not in California. They are in Hangzhou and Beijing.

Europe and the open-model camp provide a different kind of pressure

Beyond America and China, Europe and the open-model ecosystem add another layer of competitive pressure. Germany’s Black Forest Labs has continued to advance its FLUX line, stressing faster interactive visual intelligence and stronger controllability on its official channels. Firms like these may not rival ChatGPT in consumer reach, but they appeal to developers and businesses for different reasons: more flexible deployment, clearer boundaries and, in some cases, a closer fit with customisable or local infrastructure. For OpenAI, that means competition is not only about feature comparison. It also involves platform lock-in, pricing models and the battle for developer allegiance.

The deeper industrial impact is a rewriting of the content-production chain

The significance of ChatGPT Images 2.0 should not be reduced to “another stronger image model”. Its more important effect may be to rewrite how visual content is produced. A single marketing poster, an educational visual or a set of social-media graphics has traditionally required repeated collaboration between copywriters, designers, researchers and project managers. If a model can move from a written brief to output that already contains headline, subheading, layout, chart-like structure and multiple size variants, the workflow for small firms, educators and content teams could compress dramatically.

That does not necessarily mean designers disappear. It more likely means their role changes. Human value may shift away from manually assembling every asset and toward defining brand systems, reviewing quality, deciding narrative direction and correcting high-risk details. In that new division of labour, AI does not eliminate all creative work. It absorbs a large share of the repetitive, template-driven and draft-oriented tasks. That would carry long-term consequences for freelancers, agencies, platform content teams and e-commerce operators.

Several barriers remain, and one upgrade does not remove them

For all the ambition of the release, several constraints remain obvious. Text rendering may be better, but once these tools enter commercial and educational settings, the tolerance for error becomes far smaller than in carefully curated demo images. A single misspelling on a poster, a wrongly labelled map, or a distorted figure in a medical or financial graphic could create real risk. Moreover, integrating thinking mode with live search introduces its own problems of sourcing, factual accuracy and accountability. Once a model is not merely “drawing” but automatically gathering information and packaging it into a publishable visual, mistakes can emerge in a far more persuasive form.
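One way a team might guard against the single-misspelling risk is an automated copy check before anything is published. The sketch below assumes that the text in a generated image has already been extracted by some OCR step, which is outside the scope of this example, and simply reports which required phrases are missing. The function names are hypothetical, and a real review process would still need a human to check high-risk details.

```python
import unicodedata


def normalize(text: str) -> str:
    """Fold compatibility forms and case, then collapse runs of whitespace."""
    folded = unicodedata.normalize("NFKC", text).casefold()
    return " ".join(folded.split())


def missing_phrases(required: list[str], extracted_text: str) -> list[str]:
    """Return the required phrases that do not appear in the extracted text.

    `extracted_text` is assumed to come from an OCR pass over the generated
    image; this function performs no image processing itself.
    """
    haystack = normalize(extracted_text)
    return [phrase for phrase in required if normalize(phrase) not in haystack]


if __name__ == "__main__":
    required_copy = ["Summer Sale", "50% OFF"]
    # Simulated OCR output in which the letter O was rendered as a zero.
    ocr_text = "SUMMER  SALE\n50% 0FF"
    print(missing_phrases(required_copy, ocr_text))
```

A check like this catches only omissions and misspellings of known copy. It says nothing about whether a map label or a chart figure is factually right.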

There is also a business-model challenge. OpenAI has made the product available through ChatGPT, Codex and the API, but different users want different things: enterprises care about scale and integration, creators care about style control and rights clarity, while developers care about reliability and cost. Satisfying all three with a single platform will require more than strong benchmark performance.

A larger platform war is only beginning to take shape

From a longer-term perspective, the most important part of ChatGPT Images 2.0 is what it reveals about the future of AI platforms. Image generation is ceasing to be a standalone feature and becoming a key interface inside larger multimodal systems. Text, images, search, reasoning, code and workflow orchestration are being bundled into a single experience. Whoever can connect those capabilities most smoothly is more likely to control the next gateway to content production.

This release is therefore a declaration that image generation is no longer a decorative extra inside a chatbot. It is part of ChatGPT’s broader platform strategy. For Google, that will increase pressure to tighten the integration between Gemini and its media models. For Adobe, it raises the urgency of combining professional creative tools with more agentic workflows. For Chinese model providers, it reinforces the need to build image systems that are more task-aware, more commercially deployable and more deeply adapted to local contexts. The next phase of image AI may appear to be about who can create the most human-looking work. In reality, it is about who can become the least replaceable layer in the daily workflow of businesses and creators.

OpenAI describes this upgrade as the start of a new era for image generation. That may still carry the tone of a product launch. But viewed through the lenses of global competition, workflow redesign and platform consolidation, the company is at least pointing to something more concrete: the battlefield in AI imagery is shifting from whether a model can draw to whether its output can be used, trusted and deployed at scale. The consequences of that shift are only beginning to surface.